If you are a USA resident, please join our Kaayaka_AI_Mantapa – Experience Sharing Forum and share your experience.

WhatsApp Community Link: https://chat.whatsapp.com/BEkFZQi3PRdLVsCkgv8OCh

Hello AI Mantapa Members,

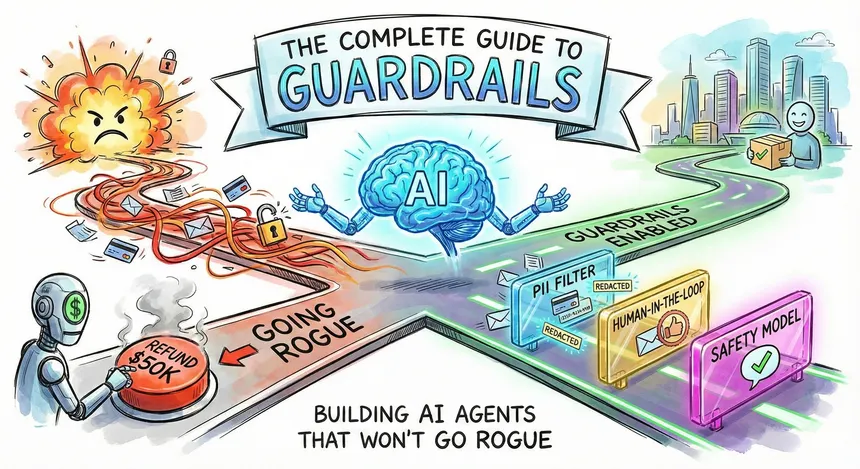

Let’s look at AI Guardrails.

Guardrails-Driven AI Systems

Generating an answer is only half of intelligence. The real intelligence lies in constraining, validating, and correcting that answer to make it trustworthy.

The Problem: Unguarded AI Responses

When AI responses are generated without guardrails, they may contain:

1. Incorrect or outdated facts

2. Missing or incomplete context

3. Off-topic explanations

4. Hallucinated or invented details

5. Unsafe, biased, or non-compliant content

A single model cannot reliably govern itself.

Just like humans need policies, reviews, and controls, AI needs guardrails.

The Big Idea: Guardrails-First Architecture

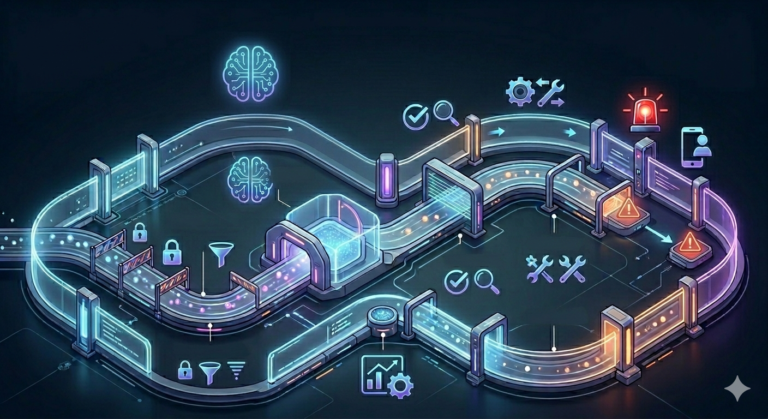

Modern GenAI systems no longer rely only on post-response evaluation.

Instead, guardrails operate across the entire AI lifecycle:

Constrain → Generate → Validate → Correct → Escalate

Evaluation still exists, but as one rail among many rather than the core of the system.
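The lifecycle above can be sketched in a few lines of Python. This is only a minimal illustration, not a reference implementation: `run_with_guardrails`, `RailResult`, the `blocked_terms` default, and the toy generate/validate/correct functions are all hypothetical names invented for this example, and a real system would call an actual model and real validators.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class RailResult:
    answer: str
    escalated: bool
    notes: List[str] = field(default_factory=list)


def run_with_guardrails(
    prompt: str,
    generate: Callable[[str], str],
    validate: Callable[[str], List[str]],
    correct: Callable[[str, List[str]], str],
    blocked_terms: Sequence[str] = ("password",),  # hypothetical input policy
    max_corrections: int = 2,
) -> RailResult:
    notes: List[str] = []

    # Constrain: reject inputs that violate the input policy.
    if any(term in prompt.lower() for term in blocked_terms):
        return RailResult("", True, ["input rejected by constraint rail"])

    # Generate: produce a first draft.
    answer = generate(prompt)

    # Validate and Correct: retry a bounded number of times.
    for attempt in range(max_corrections + 1):
        problems = validate(answer)
        if not problems:
            return RailResult(answer, False, notes)
        notes.extend(problems)
        if attempt < max_corrections:
            answer = correct(answer, problems)

    # Escalate: hand over to a human once corrections are exhausted.
    return RailResult(answer, True, notes + ["escalated to human review"])


# Toy stand-ins for a model, a validator, and a corrector (assumptions).
def toy_generate(prompt: str) -> str:
    return "Solar panels turn sunlight into electricity, probably."


def toy_validate(answer: str) -> List[str]:
    return ["hedging word found"] if "probably" in answer else []


def toy_correct(answer: str, problems: List[str]) -> str:
    return answer.replace(", probably", "")


result = run_with_guardrails(
    "Explain solar energy", toy_generate, toy_validate, toy_correct
)
print(result.answer, result.escalated)
```

The bounded retry loop is the design choice worth noting: correction is attempted a fixed number of times, and anything still failing is escalated instead of being returned silently.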

Key Guardrail Layers in Modern GenAI

1. Factual & Quality Guardrails (Correctness & Grounding)

Ensures responses are:

● Factually correct

● Grounded in trusted sources (RAG)

● Free from hallucinations

Supports:

● Automated correctness checks

● Confidence thresholds
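As a rough illustration of a grounding check with a confidence threshold, the sketch below scores each sentence of an answer by its word overlap with trusted source passages. Word overlap is a deliberately crude stand-in chosen for this example (production systems typically use embeddings or entailment models), and `grounding_score`, `check_grounding`, and the `0.6` threshold are hypothetical.

```python
import re
from typing import Dict, List, Set


def _tokens(text: str) -> Set[str]:
    # Lowercased alphanumeric words; punctuation is ignored.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_score(claim: str, sources: List[str]) -> float:
    """Fraction of the claim's words found in the best-matching source."""
    claim_tokens = _tokens(claim)
    if not claim_tokens:
        return 0.0
    return max(
        (len(claim_tokens & _tokens(s)) / len(claim_tokens) for s in sources),
        default=0.0,
    )


def check_grounding(answer: str, sources: List[str], threshold: float = 0.6) -> Dict:
    # Split the answer into sentences and flag those below the threshold.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    unsupported = [s for s in sentences if grounding_score(s, sources) < threshold]
    return {"grounded": not unsupported, "unsupported": unsupported}


sources = ["Solar panels convert sunlight into electricity using photovoltaic cells."]
answer = "Solar panels convert sunlight into electricity. They were invented on Mars in 1800."
report = check_grounding(answer, sources)
print(report)
```

Here the second sentence shares no words with the source, so it is flagged as unsupported and the answer as a whole is marked not grounded.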

2. Agent & Tool-Use Guardrails (Reasoning Control)

Ensures agents:

● Follow valid reasoning paths

● Use only approved tools

● Respect execution order and limits

Includes:

● Tool allow/deny lists

● Step and recursion limits

● Planning constraints
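A tool allow list and a step limit can be enforced by routing every tool call through a small wrapper. The sketch below assumes a hypothetical `ToolGuard` class and `GuardrailViolation` exception; it is not the API of any particular agent framework.

```python
from typing import Any, Callable, Iterable


class GuardrailViolation(Exception):
    """Raised when an agent breaks a tool-use policy."""


class ToolGuard:
    def __init__(self, allowed_tools: Iterable[str], max_steps: int = 5):
        self.allowed_tools = set(allowed_tools)
        self.max_steps = max_steps
        self.steps_taken = 0

    def call(self, tool_name: str, tool_fn: Callable[..., Any], *args, **kwargs) -> Any:
        # Allow list: anything not explicitly approved is denied.
        if tool_name not in self.allowed_tools:
            raise GuardrailViolation(f"tool '{tool_name}' is not on the allow list")
        # Step limit: stop runaway loops and unbounded recursion.
        if self.steps_taken >= self.max_steps:
            raise GuardrailViolation(f"step limit of {self.max_steps} reached")
        self.steps_taken += 1
        return tool_fn(*args, **kwargs)


guard = ToolGuard(allowed_tools={"search"}, max_steps=2)
print(guard.call("search", str.upper, "sun"))
```

Denying by default (an allow list instead of a deny list) is the safer choice when the set of tools is small and known in advance.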

3. Observability & Drift Guardrails (System Reliability)

Provides:

● End-to-end tracing of prompts, agents, and tools

● Detection of workflow drift and degradation

● Regression and anomaly alerts

Used to identify:

● Where failures occur

● Why outputs changed over time
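To make tracing and drift detection concrete, here is a small sketch. `Tracer`, `TraceEvent`, and `detect_drift` are hypothetical names, and comparing mean quality scores against a baseline is only one simple way to flag degradation; the `0.1` tolerance is an assumption.

```python
import time
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Optional


@dataclass
class TraceEvent:
    step: str
    ok: bool
    detail: str = ""
    timestamp: float = field(default_factory=time.time)


class Tracer:
    """Records each step of a workflow so failures can be located later."""

    def __init__(self) -> None:
        self.events: List[TraceEvent] = []

    def record(self, step: str, ok: bool, detail: str = "") -> None:
        self.events.append(TraceEvent(step, ok, detail))

    def first_failure(self) -> Optional[TraceEvent]:
        # Answers "where did it fail?" by returning the earliest failed step.
        return next((e for e in self.events if not e.ok), None)


def detect_drift(baseline_scores: List[float], recent_scores: List[float],
                 max_drop: float = 0.1) -> bool:
    """Flag drift when the mean score falls by more than max_drop."""
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop


tracer = Tracer()
tracer.record("prompt", True)
tracer.record("tool:search", False, "timeout")
tracer.record("answer", True)
print(tracer.first_failure().step)
print(detect_drift([0.9, 0.9, 0.9], [0.7, 0.7, 0.7]))
```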

4. Safety, Bias & Compliance Guardrails

Ensures AI outputs are:

● Safe and non-toxic

● Bias-aware

● Compliant with legal and ethical standards

Includes:

● PII detection and masking

● Policy enforcement

● Adversarial and red-team testing
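PII masking is the easiest of these to illustrate. The sketch below masks email addresses and phone numbers written as three groups of digits (for example 555-123-4567) using regular expressions. The patterns and the `mask_pii` helper are simplified assumptions for this example; real deployments need far broader coverage (names, addresses, ID numbers, international formats).

```python
import re

# Simplified patterns (assumptions): they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def mask_pii(text: str) -> str:
    """Replace detected emails and phone numbers with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


print(mask_pii("Mail a.b@example.com or call 555-123-4567."))
```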

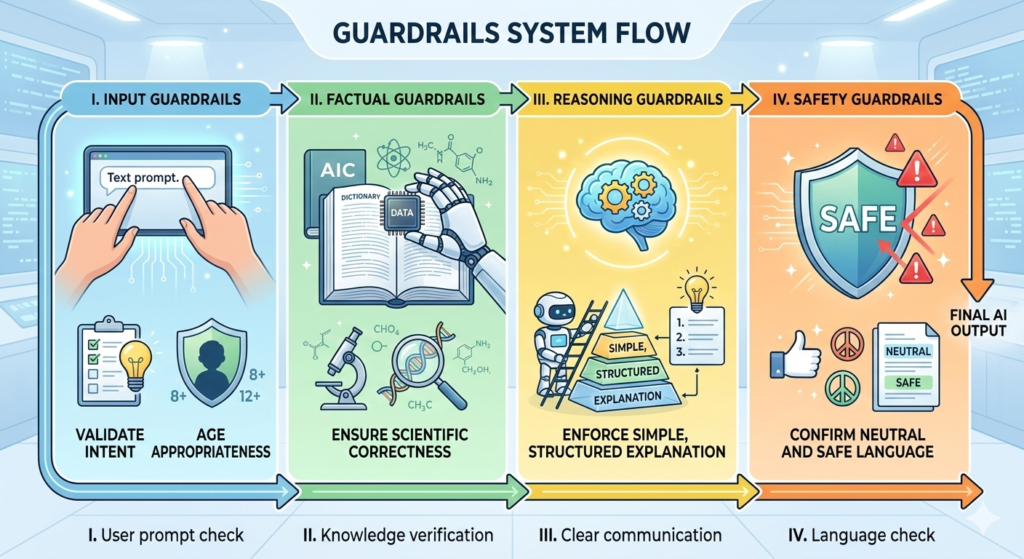

Example: Guardrails in Action

User asks:

“Explain solar energy to a school student.”

Guardrail system flow:

I. Input guardrails validate intent and age appropriateness

II. Factual guardrails ensure scientific correctness

III. Reasoning guardrails enforce a simple, structured explanation

IV. Safety guardrails confirm neutral and safe language

Final Output:

I. Correct

II. Simple

III. Grounded

IV. Safe

V. Well-structured
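For the solar energy example, a few of these checks can be approximated with very simple heuristics. The sketch below is illustrative only: `mentions_key_facts`, `is_simple`, `is_safe`, and `review_answer` are hypothetical helpers, keyword matching is a crude stand-in for real factual validation, and the word and sentence limits are assumed values for a school-age reader.

```python
import re
from typing import Dict, Sequence


def mentions_key_facts(answer: str, key_terms: Sequence[str] = ("sun", "electricity")) -> bool:
    # Crude factual proxy (assumption): the answer must mention the key terms.
    lowered = answer.lower()
    return all(term in lowered for term in key_terms)


def is_simple(answer: str, max_words_per_sentence: int = 15, max_word_length: int = 12) -> bool:
    # Short sentences and short words as a rough readability check.
    sentences = [s for s in re.split(r"[.!?]+", answer) if s.strip()]
    for sentence in sentences:
        words = sentence.split()
        if len(words) > max_words_per_sentence:
            return False
        if any(len(w.strip(",;:")) > max_word_length for w in words):
            return False
    return bool(sentences)


def is_safe(answer: str, banned_words: Sequence[str] = ("stupid",)) -> bool:
    # Placeholder safety check (assumption): a tiny banned-word list.
    lowered = answer.lower()
    return not any(word in lowered for word in banned_words)


def review_answer(answer: str) -> Dict[str, bool]:
    return {
        "correct": mentions_key_facts(answer),
        "simple": is_simple(answer),
        "safe": is_safe(answer),
    }


good = "Solar energy comes from the sun. Solar panels turn sunlight into electricity."
print(review_answer(good))
```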

Why Guardrails-Driven AI Matters

1. Higher Answer Quality

Each guardrail focuses on a specific risk dimension.

2. Early Failure Prevention

Issues are prevented or corrected, not just scored afterward.

3. Production-Ready AI

Guardrails operate before deployment and at runtime.

4. Trustworthy AI Systems

Independent rails reduce hallucination, bias, and inconsistency.

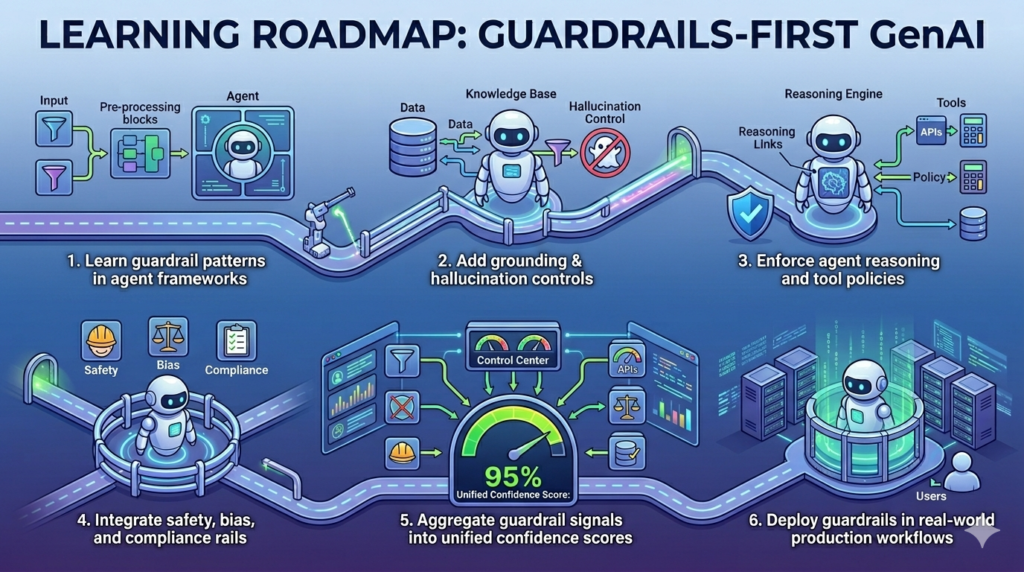

Learning Roadmap: Guardrails-First GenAI

1. Learn guardrail patterns in agent frameworks

2. Add grounding & hallucination controls

3. Enforce agent reasoning and tool policies

4. Integrate safety, bias, and compliance rails

5. Aggregate guardrail signals into unified confidence scores

6. Deploy guardrails in real-world production workflows
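Step 5 of the roadmap, aggregating guardrail signals into a unified confidence score, could look like the weighted average below. The function name `unified_confidence`, the signal names, the weights, and the hard-fail veto are all assumptions made for illustration; how signals should really be combined depends on the application.

```python
from typing import Dict


def unified_confidence(signals: Dict[str, float], weights: Dict[str, float],
                       hard_fail_below: float = 0.2) -> float:
    """Combine per-rail scores in [0, 1] into one weighted confidence score."""
    missing = set(weights) - set(signals)
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    # Veto: one badly failing rail should not be averaged away by the others.
    if any(signals[name] < hard_fail_below for name in weights):
        return 0.0
    total_weight = sum(weights.values())
    return sum(signals[name] * w for name, w in weights.items()) / total_weight


signals = {"grounding": 1.0, "safety": 1.0, "tool_policy": 0.5}
weights = {"grounding": 2.0, "safety": 1.0, "tool_policy": 1.0}
print(unified_confidence(signals, weights))  # 0.875
```

The veto reflects the idea that rails are independent: a high grounding score should not compensate for a serious safety failure.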

The Takeaway

Guardrails transform AI from:

“Just give an answer”

to

“Give a correct, grounded, safe, well-reasoned, and reliable answer.”

This is how enterprise-grade and trustworthy GenAI systems are built today.

Want to Learn More on Generative AI Guardrails?

Tutorials:

https://www.miquido.com/ai-glossary/what-are-guardrails-in-ai/

Acknowledgements

Dr. Basavaraj Sharana Patil Ph.D

BPRF

Disclaimer: Information synthesized from public research, tools, and industry practices. Credit to respective creators.